Since 2005 we have been based in the Stuttgart area, working successfully for national and international companies (EU, China, USA), public institutions, photographers, magazines, galleries and museums, true to Dieter Rams's design principle "less but better". A cornerstone of our work is always communicating with our clients as equals and approaching every brief with the right mix of creativity and realism. For us, good design is: honest – unobtrusive – understandable – innovative – consistent – emotional.

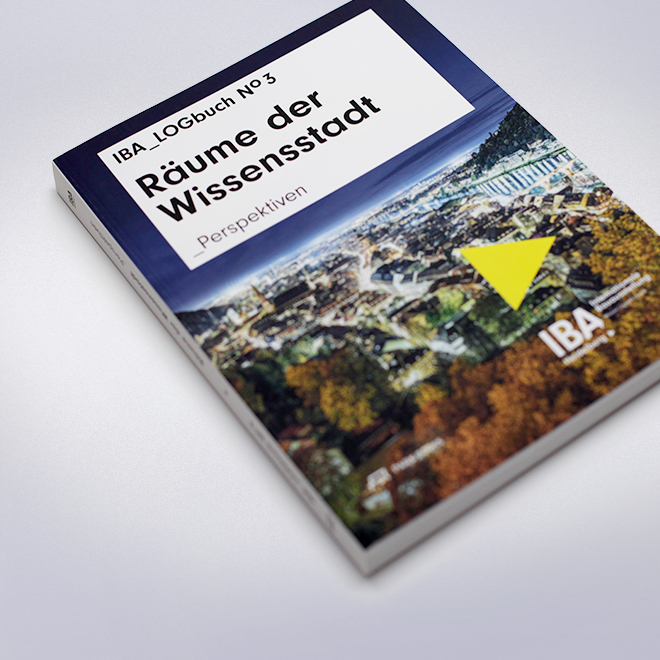

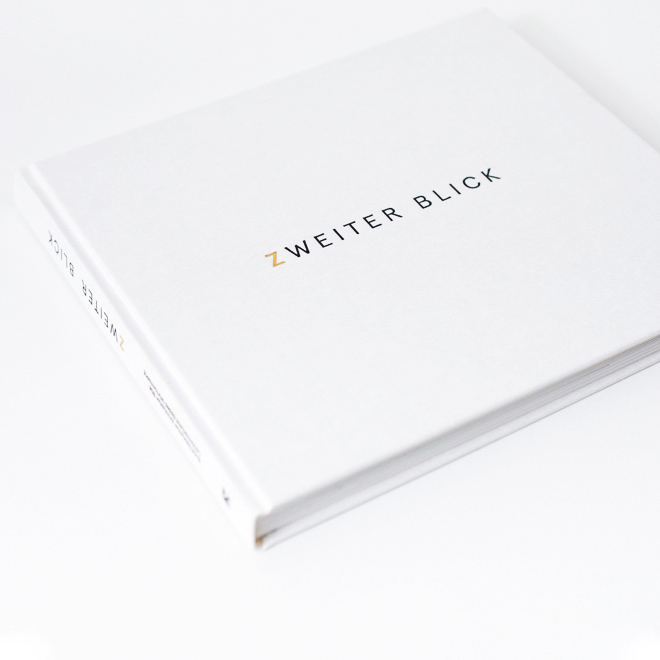

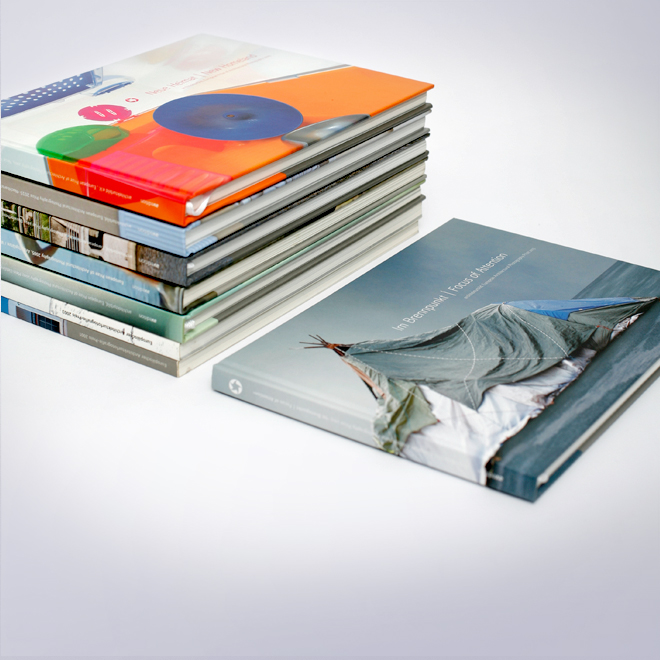

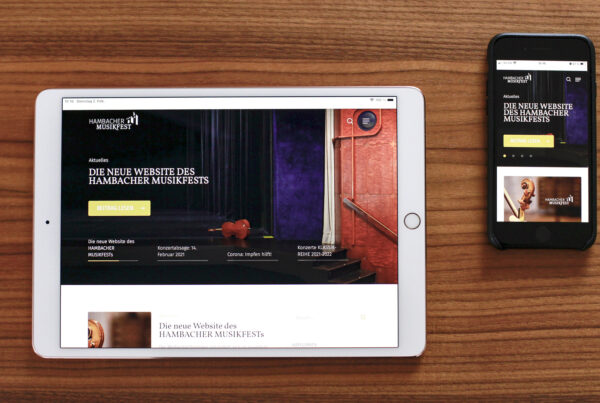

Our expertise lies in editorial design (book and magazine design), corporate design (logo and graphic design) and, increasingly, user interface design (automotive and mobile devices). Our work is shaped by a minimalism that we have reinterpreted. To us, it does not mean thoughtless reduction. It means minimalism in the service of a distinctive execution and of function down to the last detail. We want to show what is worth being seen, discovered and experienced: recognition through individual design, and the sensitive communication of your identity that follows from it.

We work consistently, reliably and with a clear focus on solutions to bring your ideas to life, from the first concept to the final presentation.